Braden with the Roi (King) of the Nice Carnival Parade, 2008, Nice, France

Let’s get this straight. Philosophers who are far more sophisticated than me, as well as neuroscientists and a list of other professions, have been working on the problem of how people think for as long as humans have been on the planet. But we need a model so we can move forward in our understanding of knowledge in social networks. And that’s what we’re talking about today — a model, simple enough to be useful.

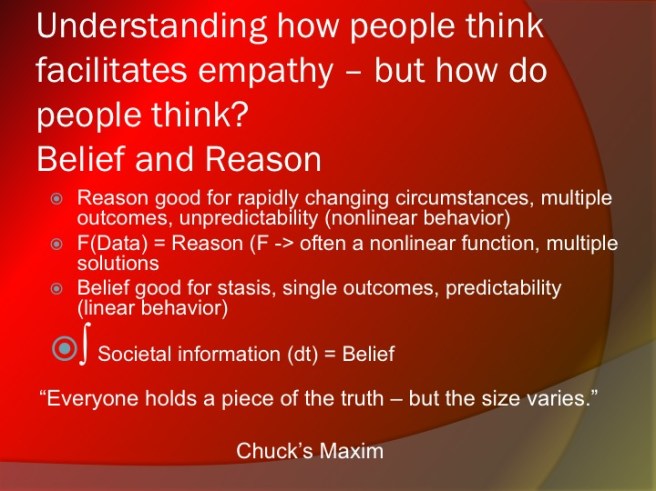

Since I like Ken Wilber, let’s start with his framework: the idea that we can split individual human thought into a fundamental dichotomy of belief and reason. This is a useful division. There are only two categories (handy!), and they also map to some basics of brain function, à la Daniel Kahneman and his book Thinking, Fast and Slow. Belief is associated with the impulsive, compacted, downloaded part of the brain, otherwise known as the limbic system. Reason ostensibly sits in the prefrontal cortex, that big hunk of sophisticated gray matter that supposedly separates us from the lower beasts. Maybe!

Here’s a little slide from a PowerPoint I give to help lay it out.

We’ve made the case in the past that reason, when coupled with interactions with other people, comes with a developed rational empathy, and that it involves taking data from a given circumstance and processing it in a complex way to reach a conclusion. We’ve used math paradigms before, and we’ll use one now: your brain takes the data and performs a functional transformation that lets you reach a conclusion. It may take a little while, brain-wise; this is Kahneman’s slow thinking, after all. But the data knocks around in your frontal lobes, and at some point your brain reaches a conclusion. Depending on your development, you’ve applied an algorithm, a heuristic, or some combination of heuristics. As people sometimes say about relationships, “it’s complicated.”

Contrast that with belief. Belief is something that has already been processed, either by you or, more likely, by the larger society. That aggregation happened in the past, and the result was boiled down to one thing or another. It is not as dependent on the current situation, though the current situation could trigger the emergence of the belief.

In mathematics, you could represent belief as a Direct Integral Function. What’s that? Basically, it’s a long sum of information, all added up and normalized in some way or another, where the more complex nature of the information is averaged out. You can’t know exactly how a belief came into being, because while there may be some obvious factors, there are also less obvious factors whose individual details have been lost in that averaging process. Just as a single number, such as an average, doesn’t tell you the shape of the probability distribution it summarizes, a belief can’t really tell you about all the data that went into it.
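To make the averaging point concrete, here is a minimal Python sketch. It assumes, purely for illustration, that a belief behaves like a normalized weighted sum of past observations; the `collapse` function and both data sets are hypothetical, invented numbers rather than a model of any real brain. Two very different histories collapse to the same single number, and nothing in that number lets you recover which history produced it.

```python
from statistics import pstdev

def collapse(observations, weights=None):
    """Hypothetical 'belief' operator: a weighted sum of observations,
    normalized by the total weight, so only one number survives."""
    if weights is None:
        weights = [1.0] * len(observations)
    total = sum(weights)
    return sum(w * x for w, x in zip(weights, observations)) / total

# Two invented histories with very different shapes.
steady = [5, 5, 5, 5, 5, 5]          # every experience was middling
polarized = [0, 0, 0, 10, 10, 10]    # experiences were all-or-nothing

for name, history in [("steady", steady), ("polarized", polarized)]:
    # Same summary value, very different spread underneath it.
    print(name, collapse(history), pstdev(history))
```

Both histories report a belief of 5.0; only the spread (0.0 versus 5.0) reveals that they came from different data, and the spread is exactly what the summary throws away.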

And that’s important — because when someone tells you “well, the reason for the belief is…” you know they’re full of it. Belief, as some meta-integral of a complex of data, can’t be explained solely through reason. There’s other stuff buried in there.

That doesn’t mean beliefs aren’t good things. One of my favorite examples is asking people what they do when they cross the street. Inevitably, they say “look both ways.” That’s been pounded into their heads by their mother and father since they were four. I’ll then ask them, “Well, what if it’s a one-way street?”

Why do we look both ways? And why is this particular belief useful? It arms us with a short-time, semi-automatic response that keeps us from getting run over. Imagine if we took a more rational approach to crossing the street. We’d approach the street, evaluate whether it was one-way or two-way, and then decide what data to collect. We might consider the speed limit and the road conditions. Then we’d have to sit down and scratch our heads. By the time we finally got across the street, we’d be late for our meeting. Or we might get hit by a car.

Belief is also interesting in that it’s fundamentally binary in nature. Either you believe something, or you don’t. It should be no surprise that belief maps to the lower levels of the Spiral as the dominant mode of thinking. As an Authoritarian, you either believe something or you don’t, depending on who said it and their status. The right/wrong of the Legalistic v-Meme corresponds to the concept of rules as well: you either break them or keep them. No questions asked.

Reason is a more complex beast. It begins in the Legalistic v-Meme, with logical processes that require data particular to a situation. Decision logic comes into play: if/then lets us separate different cases and handle a wider variety of situations. As we introduce more variability due to independent agency, our reasoning takes on a broader, probabilistic flavor, and we start to see heuristics emerge. It just gets more complex from there.
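The progression from binary belief to if/then logic to probabilistic heuristics can be sketched with the street-crossing example. This is a minimal Python illustration under stated assumptions, not a model from Wilber or Kahneman: the `Street` fields, the Poisson model of car arrivals, the 10-second crossing time, and the 5% risk threshold are all hypothetical choices made for the example.

```python
import math
from dataclasses import dataclass
from typing import List

@dataclass
class Street:
    one_way: bool
    traffic_from_left: bool   # hypothetical field; only meaningful when one_way is True
    cars_per_minute: float

def belief_rule(street: Street) -> List[str]:
    # Belief: a cached, binary answer that ignores the particulars of the street.
    return ["left", "right"]

def legalistic_rule(street: Street) -> List[str]:
    # Early reason: if/then logic uses situation-specific data to separate cases.
    if street.one_way:
        return ["left"] if street.traffic_from_left else ["right"]
    return ["left", "right"]

def p_car_arrives(cars_per_minute: float, seconds_to_cross: float) -> float:
    # Probability that at least one car shows up while we cross,
    # ASSUMING arrivals follow a Poisson process.
    rate_per_second = cars_per_minute / 60.0
    return 1.0 - math.exp(-rate_per_second * seconds_to_cross)

def heuristic_ok_to_cross(street: Street, seconds_to_cross: float = 10.0,
                          max_risk: float = 0.05) -> bool:
    # Probabilistic reasoning: cross only if the estimated risk is acceptable.
    return p_car_arrives(street.cars_per_minute, seconds_to_cross) < max_risk

quiet_one_way = Street(one_way=True, traffic_from_left=True, cars_per_minute=0.1)
busy_two_way = Street(one_way=False, traffic_from_left=False, cars_per_minute=30.0)

for street in (quiet_one_way, busy_two_way):
    print(belief_rule(street), legalistic_rule(street), heuristic_ok_to_cross(street))
```

The belief rule is the cheapest to run and never needs data, which is the point of the “look both ways” example; each step up buys flexibility at the price of needing more, and better, data.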

We can, however, with a little help from our friend Ken Wilber, place this into a system of evolving thought. That structure is in the PowerPoint slide below:

One can easily see a rough mapping between these levels and the Spiral. Magical maps to Tribal/Magical v-Meme organization (in terms of time scales, we get short-time/long-time dynamics). Mythical certainly mixes in there, and also extends up into belief-based Authoritarianism. In our own society, we see a lot of Mythical thinking around the United States Constitution, for example. But that’s not the only place. Most faculty are largely Mythical/Rational. Ask any university professor why they teach a particular class a certain way, and they’ll be quick to tell you that it’s the way their graduate advisor did it. Graduate school: now THAT was a mythical time!

Rational thinking: people like the idea of rationality, and to be sure, it’s a data-driven ladder to better things. But few people consider the downside of rationality: its dependence on how good the data actually is. You can use a rational-algorithmic or a rational-heuristic process, but it’s Garbage In/Garbage Out. Your decision is only going to be as good as both the process AND the data.

Multiple perspectives/Plural thinking map well to Communitarianism: everyone has an opinion, and everyone should be included in the process. Until the process just doesn’t work anymore. So it’s not perfect, either. One can start to see that there are some fundamental information-theoretic/thermodynamic balances at work between belief and reason. If you don’t have enough time, space, and energetics in your data or in your beliefs, no matter where you are in Wilber’s hierarchy, you’re not going to make good decisions.

Last up is Integral Thought: the idea that you can reach the truth, but it is hard, and it takes a long time. This gives some insight into why Servant Leadership 2.0 and advanced design practice both offer pathways to Integral Thought. You have to be self-reflective and consider your own biases, especially confirmation bias. You can’t get to an objective truth until you can see how you’re part of the larger system that generates it. And if you’re a big part of it, something like the observer effect (often loosely attributed to Heisenberg’s Uncertainty Principle) rears its head: the very act of observing, and potentially interacting, changes the truth itself. As a manager, it’s something to remember.

The last part of understanding belief and reason, as well as their structure in Wilber’s Thought Hierarchy, is understanding who can understand what. Someone operating lower on the hierarchy simply can’t understand thoughts constructed higher on the hierarchy. What is the implication of this? Let’s say you’re arguing with a Bible Literalist, someone who views every sentence in the Bible as absolute truth. You go into the argument convinced you’re going to change their mind. You present data, including what you feel is proof that the Bible has been translated from the original Hebrew. They don’t buy it. You try and try, dredging up information, photos, and such. They won’t budge. Why?

This is a classic example of a clash of levels on the Thought Hierarchy. The Bible Literalist is likely operating from, at most, a Mythical framework, and may even hold a Magical perspective, believing the Bible gives exact dates for various events, such as the Apocalypse. What is important is to understand how they view your argument. The short answer is that, to them, your argument is only a representation of your belief. In their mind, the only objective truth is that people can only argue FOR their beliefs. How about that for an Authoritarian/Egocentric v-Meme mapping?

The same principle operates up the Hierarchy. A Plural thinker is going to have problems with an Integral thinker; you don’t get out of the frame until you become self-aware. So if the person you’re arguing with isn’t self-aware, you’re stuck. Or maybe you’re the one who’s stuck.

It’s not hard to take Wilber’s Thought Hierarchy and map it back to the whole series on differential v-Meme conflict that I wrote here. People at different v-Meme levels don’t just differ in the structure and synergy of their knowledge; they literally aren’t using the same parts of their brains to communicate with each other. And at the lower levels, their ability to connect and trust is also radically diminished, so they have absolutely no reason to listen to anyone whose thinking doesn’t match their own. The necessary neural patterning just isn’t there.

One of the interesting things I’ve noticed as a teacher is that a majority of young people have a leg up in this situation because of their inherent neural plasticity. If they’ve been properly prepared, they can literally wrap their heads around thoughts (through a combination of mirroring behavior and general receptivity) that older people will struggle with. More importantly, if they’ve been patterned successfully with a variety of complex algorithmic thinking processes, then even though they core-dump specific knowledge after any given test, they can rapidly re-assimilate it if needed. Students receiving a B.S. in engineering, which emphasizes complex, sophisticated algorithmic thought compared to a more skill-based degree, have this capacity. Many a plant manager has told me, “I never expect them to know anything. But they are just different, and pick things up faster.” I’d argue that this isn’t just a result of sorting the ‘smarter’ kids into more demanding degrees. It’s actually a function of Wilber’s Thought Hierarchy in action, and of the linkage to more sophisticated social structures that those students are required to have.

Takeaways: Pay attention to the arguments people use, and you can usually figure out pretty quickly whether they are working from principles of belief or reason. But pay attention to the way you think as well. Getting to truth is hard for any one person, and if you want to develop a real Integral perspective, you’re likely going to have to work on your empathy as well. It’s a never-ending process.

A pattern I’ve noticed a lot in my workplace starts at pluralistic thought and then devolves (or defaults) back down to belief-based thought: there’s an opportunity for a new product -> lots of new, divergent ideas are generated (plural) -> but we don’t have the data, or the resources to collect data, to vet any of the new ideas (rational) -> so we’ll just do what we’ve always done, since we know it works (mythical). Which is understandable a lot of the time, since if you don’t have the resources to vet a new idea, you’d be taking a large risk going down that road.

And I think this is why all of the hip software development companies have better luck with higher level decision making structures, like the agile process you mentioned recently. If you’re making a software prototype, it doesn’t cost anything more than the time (and opportunity cost) it takes to design the code. Compare that with traditional manufacturing companies where prototyping involves not just design, but also material purchasing ($), vendor selections, machine time ($), assembly, and finally testing. Factor in shipping and lead times and the whole process can take months just to get a proof of concept built. Makes me jealous of those guys who can just hit F7 to see if something works.

So correct me if I’m misunderstanding this, but is that the essence of the “hard to get to” part of integral reasoning: that it requires resources to pick through the multiple solutions arrived at with pluralistic thinking? Is there a way to make use of pluralistic reasoning without defaulting back to belief modes in the absence of existing data or big pocketbooks?

Ryan — there’s a whole post in your comment. Here’s the short version — it ain’t communitarianism if they take the pie and parcel it into tiny pieces. That’s just a modified form of authoritarianism.

I think your comment on why it’s easier to do it in software is also true. It goes back to the time/space/energetics principles I talk about. Money is a great measure of energetics, and as such, there’s definitely a lower Gibbs Free Energy requirement in software. Plus, one of the things I have discussed (though it hasn’t made it to the blog) is that when you build something like a rocket, you can’t just upload a new engine mid-flight if there is a malfunction. Contrast that with Microsoft Word. Systems that design rockets have to be much more heavily scaffolded in the lower v-Memes (which is why you need expert hierarchies). The challenge is always building enough self-awareness of these facts into those systems that you still have creativity. If you go back in the blog and read about Algorithmic Design, I discuss this.

Hope this helps. I’m going to write up a post on faux-Communitarianism. And remember — once you get to a higher level, you’ve got all those other things nested inside of you. So it’s no surprise that in the face of higher challenge (or too much challenge) things devolve back down to safety pretty quickly. Even if your company is big on sharing information and connection for most things.

This made me think of Jordan Peterson and Sam Harris’s podcast debate on “what is true”.

Peterson proposes that scientific truth is “nested within a [Darwinian] moral framework”, which implies that there may exist facts which are true but “not true enough”, since they’ve proven adversarial to survival. That would almost argue that integral reasoning can be nested in a moral (mythological) framework. Sam Harris, by contrast, argues that scientific truth is not concerned with morality in any way.

It was a long, arduous conversation, but still interesting enough 🙂
