White Sand Lake, Selway-Bitterroot Wilderness, Clearwater NF, Idaho

A friend posted a blurb on the Dunning-Kruger effect a day or so ago on Facebook. It’s political, and not very insightful, so I won’t re-post. But it made me revisit my own writings on the subject, and realize that I hadn’t done a very good job of discussing the concept, which is very important for understanding information dynamics inside organizations. Dunning-Kruger can be a powerful tool for ridicule of audiences you don’t like, as you’ll see when I get done explaining it. But the work that David Dunning and Justin Kruger, both then at Cornell University, published in 1999 has tremendous ramifications for understanding knowledge transfer inside business organizations, as well as current societal trajectories.

What’s the basic idea behind Dunning-Kruger? It’s this: people who are relatively unskilled in a particular area will rank their abilities in that area much higher than their actual performance warrants. In the language of this blog, it means that their metacognition is low: the list of things they know they don’t know is short, and they aren’t remotely aware of the things they don’t know they don’t know. They’re basically not aware that they’re dumb.

But there are flip-side corollaries to Dunning-Kruger involving people with demonstrated expertise. Experts, when asked how their knowledge stacks up relative to others, will often assume that other people know a lot that the experts know, and will project their comprehension of specific expertise onto others — a direct manifestation of the Authoritarian/Egocentric v-Meme, no matter how ostensibly humble or well-meaning.

As a teacher, I can personally vouch that this is an enormous problem for me as I’ve aged. You teach the students in one class something, and then the next class comes along — fresh, brightly scrubbed faces that look very much like the last bunch — and you assume that surely they must know what you just taught the previous class! For me, each semester, I sit down and work out hitting the reset button in my own head so I minimize this effect.

Here’s the point — that’s also Dunning-Kruger. It’s not just about other people whom you think are dumb. It’s also about all of us, at some level. At times, we’re so dumb, we’re unaware how dumb we are. And at other times, we’re so smart (or learned) we don’t know how smart we are. This lack of metacognitive perception is a two-edged sword.
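The two-sided claim above can be put into a toy numerical sketch. The model below is an assumption made purely for illustration, not Dunning and Kruger’s own analysis: it supposes that self-estimates cluster around an optimistic anchor (the hypothetical `anchor=65.0`) and respond only weakly to actual skill (the hypothetical `sensitivity=0.3`). Under those made-up numbers, low performers overrate themselves and high performers underrate themselves.

```python
def perceived_percentile(actual, anchor=65.0, sensitivity=0.3):
    """Toy model (an assumption, not measured data): self-estimates hug an
    optimistic anchor and move only weakly with actual skill."""
    return anchor + sensitivity * (actual - 50.0)


def miscalibration(actual, anchor=65.0, sensitivity=0.3):
    """Positive means overestimating oneself; negative means underestimating."""
    return perceived_percentile(actual, anchor, sensitivity) - actual


# Compare a low, a middling, and a high performer under the toy model.
for actual in (10, 50, 90):
    gap = miscalibration(actual)
    label = "overestimates" if gap > 0 else "underestimates"
    print(f"actual percentile {actual}: {label} by {abs(gap):.0f} points")
```

The specific numbers carry no weight. The point is only that a single weak link between skill and self-assessment is enough to produce errors at both ends of the spectrum.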

There’s a famous funny story in the academy, where a math professor is doing an elaborate proof of a particular theorem on the board. He comes up to a spot, where he turns to the class after writing down a particularly complex part of the proof, and says ‘Of course, it’s obvious…’ He then looks at it, exits the room for 10 minutes, then comes back and says, ‘Of course, it’s obvious’ and continues writing! That’s classic Dunning-Kruger.

(As a side note, I committed Dunning-Kruger on my original mention of Dunning-Kruger in my blog, back in July. I said something to the effect of ‘I wrote about Dunning-Kruger and surely you all are familiar with it!’ 🙂)

For those that remember the posts about metacognition, the magnitude of the Dunning-Kruger effect on an organization is powerfully affected by the social/relational structure. If you’re in a status-based, Authoritarian organization, where discussing the organization’s problems is strictly verboten, and pointing out areas where the organization (or leadership) lacks knowledge results in punishment, it doesn’t take long for metacognition to collapse. The organization becomes, a la Rogers’ Theory of Diffusion of Innovations, a home full of Late Majority and Laggard employees, which is anathema in the rapidly evolving, hi-tech information-sharing landscape of today.

And it can happen to organizations full of very smart people. Anyone failing to believe this needs to examine the rapid collapse of certain companies in the tech industry, like DEC or IBM. Microcomputers were the disruptive technology, of course. And while super-large, mainframe-esque computers still occupy a small part of the computing landscape, the organizations/customer base they service (consider the fact that IBM is still in the mega-supercomputer business), not surprisingly, match the social structures and frontier tech aspirations of those with the same design limitations.

A great example of this would be the Lawrence Livermore National Laboratory, with its mission of advanced understanding of exploding nuclear weapons, truly a relic technology (as well as the nuclear fusion program), and the IBM Sequoia supercomputer that was built especially for them. It’s no surprise that these two partners (IBM and LLNL) are in the nuclear simulations game. And it’s also important NOT to discount the relative sophistication of the games these people play. But to what end? Does the world really need smaller nuclear weapons? What about the core validity of these programs? And at the same time, how else could the Legalistic/Absolutistic v-Memes allow the system behavior to emerge?

Confirmation bias also comes into play. The phenomenon, which has a history going back to Thucydides, has been more recently defined and researched by Peter Wason as the tendency to search for, interpret, favor, and recall information in a way that confirms one’s beliefs or hypotheses, while giving disproportionately less consideration to alternative possibilities. It is also a major factor in the destruction of metacognition.

What confirmation bias means in simple English is that if you believe something, a natural tendency is to go out and find more evidence that proves the thought in your head, rather than evidence that confronts what you believe. And it’s everywhere (warning: potential confirmation bias of confirmation bias!). The simple child’s game of spotting a Volkswagen and then punching your brother in the arm, known by my own kids as Slug-Bug, is prone to confirmation bias. They start by only punching their brother when they see a Beetle. But the game rapidly degenerates to any small car becoming a reason to beat the living daylights out of each other.

Organizations with particularly rigid belief structures (like universities!) can be extremely prone to confirmation bias destroying metacognition (and promoting Dunning-Kruger effects) as well as destroying meaningful examination of belief structures. One of the areas that vexes me most is the perspective that students are lazy and prone to cheat. I’ve found from my own experience (also prone to confirmation bias!) that a certain, measurable subset of kids cheat, and that this number doesn’t substantially change year-to-year. The ways they do it are relatively predictable as well, and if you design curriculum and evaluation around these techniques, you don’t have a problem with cheating. Yet, inevitably, there are routine outbreaks of professorial concern (and the attendant task force!) regarding cheating, where if you stand up and voice a view similar to the one I just stated, you’re branded as a heretic. Not surprising in an institution dominated by Authoritarian and Legalistic/Absolutistic thinking that spends an inordinate amount of time focusing on control.

Confirmation bias weighs heavily in Dunning-Kruger scenarios of self- and organizational competency assessment. People inside an insulated organization where underlings consistently confirm their boss’ approach as the correct one feed into leadership’s perception that they are on the right track. And as the worm turns, such organizations also grind out other employees that could fix the problem. Those people leave because either a.) they don’t believe the superficial beliefs, b.) they don’t appreciate the workplace dynamic of working in fear for their careers, or c.) they’re fired.

One of my mentors, Al Espinosa, who worked as a fish biologist for the US Forest Service, and is one of the pioneers of using data-driven analyses for watershed health, had a term for this — the Synergistic Stooge Effect. (Al also ended up being driven out of the Forest Service.) People with the same belief structures would sit in the same room and tell themselves the same thing over and over, in denial of outside data, until the whole enterprise (and all the watersheds they were responsible for) started literally falling apart, with landslides and road collapses. Citizen lawsuits became a primary driver of reform — because the USFS’ own data showed decline, and problems became so obvious that citizens could pursue enforcement through an outside agency — the Federal Courts. Even under the reality of legal jurisprudence inside the Federal Courts that the ‘King’ (in this case, the US Government) can do no wrong. At least not until you get to Federal Appeals Court.

Al even developed a lexicon for the professionals inside the agency that facilitated the group’s Dunning-Kruger behavior: lap dogs (underling authoritarians) and displacement behavior specialists (biologists inside the agency who would run away from problems and hike in the wilderness to avoid conflict) were two types. Needless to say, there are many pathologies that organizations enshrine when denial and metacognitive collapse become part of organizational culture.

It might be easy to see how people we’d consider stupid would consider themselves smarter than other people when asked to evaluate their competence. But how does the other end of Dunning-Kruger work? How do smart people consider themselves only marginally more competent than others in the general population pool?

One of the interesting areas I’ve explored is how expert thinking actually works. Often you will have high experts who reach answers that might require other, less-developed individuals extensive time to come to the same conclusion. Understanding this can be traced back to Roger Martin’s mystery => heuristic => algorithm paradigm. As we’ve discussed earlier, Martin’s paradigm maps well to the Spiral, with Mystery/Guiding Principles thought being referenced to the upper, more unknown parts, Heuristic mapping to Performance/Goal-Based behavior, and algorithmic thought mapping to Legalistic/Absolutistic social structures.

In real experts’ heads, there is what I call a v-Meme compaction of information from higher levels into lower, more impulsive parts of the brain. This turns more complex information structures from slow, reasoned thought to quick thought. The brain is an amazing thing, always looking to free up space. So if the brain can take more complex processes buttressed by hundreds of examples and create an established behavior, it will if we let it. Confirmation bias will help your brain make the decision that it ought to speed up with certain kinds of decisions — after all, the situation is the same, isn’t it? And certainly, it’s not, all in all, such a bad thing. As one rises in an organization, the expansiveness of the decision landscape keeps growing. You’ve got to figure out somewhere to put all the detritus.

It’s certainly true in hi-tech. Up to a certain point of time in the early ’80s, experts likely would recommend the next largest mainframe computer to come along as a solution to business problems. (My first microcomputer that I used when I worked in the steel mill in 1982 was a hobby-based Heath-Tecna.) And for the longest time, in all tech heads, the idea that real computing could be done by a smaller box was something that did not play well inside our own noggins, filled with our own version of confirmation bias.

Yet we all know the end to the story. Our current expectation is that computers will now get smaller, and ‘bigger is better’ has now been replaced with another belief framed by confirmation bias – ‘if it’s not shrinking, it’s lower tech.’

We all do this, at some place in our lives. We process enough similar experiences, we down-compact. When I go kayaking, when I’m getting my gear together, I always count out the Big Five: paddle, lifejacket, sprayskirt, helmet and boat. Eventually even this process down-compacts even more and I have a bag where I count all the gear going in, and going out, so I condense getting ready to boat into grabbing that one bag. It’s all there, and I can think about what kind of beer I’d like to drink.

Where it becomes a threat is when we’re not aware that we’re doing this — that this is a natural part of brain function. V-Meme Down-compaction loads information into non-empathetic, belief-based knowledge structures that are fundamentally ungrounded or self-referential, like rules or knowledge fragments. That doesn’t mean that they’re necessarily invalid — I’ll always need my five pieces of gear to go boating.

And this is where self-awareness and empathetic connection come in. If there isn’t an individual inspection/feedback component when I put the gear into the bag, if I rip my sprayskirt on a rock, and it goes into the bag automatically, or if a friend doesn’t remind me to check my gear when I’m leaving the house, I end up sitting on the bank the next time I want to go paddling.
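As a rough sketch of that inspection/feedback component, the Python below treats the packed bag as a cached decision and re-checks it before a trip. The function name `inspect_bag` and the condition labels are hypothetical, chosen only for this illustration.

```python
# The Big Five come from the post; everything else is an illustrative assumption.
BIG_FIVE = ("paddle", "lifejacket", "sprayskirt", "helmet", "boat")


def inspect_bag(gear):
    """Return a list of problems; an empty list means ready to paddle.

    `gear` maps each item to a condition string, where "ok" means usable.
    """
    problems = []
    for item in BIG_FIVE:
        condition = gear.get(item)
        if condition is None:
            problems.append(f"{item}: missing")
        elif condition != "ok":
            problems.append(f"{item}: {condition}")
    return problems


# The down-compacted habit says "the bag is always complete".
gear = {item: "ok" for item in BIG_FIVE}
# Reality intrudes: the sprayskirt ripped on a rock last trip.
gear["sprayskirt"] = "torn"
print(inspect_bag(gear))
```

Grabbing the bag stays fast, but the check is what keeps the shortcut grounded in the current state of the gear rather than in the belief that nothing has changed.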

Organizationally, how do empathetic connection and higher organizational modes come into play? Empathy drives coherence with others, and provides a grounding feedback loop that forces reconsideration of decisions made by experts inside your organization. Organizations with Performance/Goal-Based v-Meme structures or higher are much less likely to fall into either version of the Dunning-Kruger trap. Outside grounding (and, more importantly, the receptivity to outside grounding), established by things like neutral focus groups or regular customer visits and listening programs, can do much to cause appropriate reconsideration of this constant problem of institutional atherosclerosis. This is why kaizen events with customers can be such great ideas.

A little empathy works even in the most dire of circumstances. There was a period when Democratic and Republican members of the Senate started a ‘lunch contact’ program. Amazingly enough, even that little bit of Tribal v-Meme empathetic connection reduced conflict.

And now we can see how this ties back to Servant Leadership 2.0 and the mindful Servant Leader. It is only with that process of empathetic connection and self-examination that we can hold those Dunning-Kruger beasts at bay. A leader at the top who exhibits that kind of mindfulness and need for connection is definitely going to see mirroring behavior further down the chain of command, with all its benefits, and all of it is anathema to Dunning-Kruger manifestation.

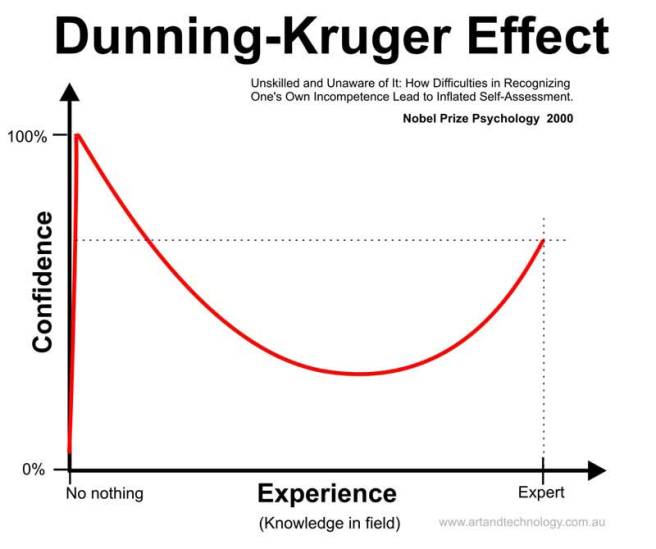

There’s a curve that shows the progression of Dunning-Kruger I’ve posted from this website below:

(Contrary to the figure’s announcement, Dunning and Kruger were not awarded the Nobel Prize in psychology, which doesn’t exist. Rather, they were awarded the Ig Nobel Prize, which is a parody.)

Organizational managers might like to operate in the valley — the position of maximum metacognition — but we all enjoy the benefits of colleagues who give quick, right-thinking answers as well. The real answer is that combination of self-reflection, personal mindfulness, and empathetic connection to outside audiences to ground ourselves to reality. As well as being humble when asked the question “Are you sure you know what you’re talking about?”

Takeaways: The Dunning-Kruger effect shows up on both ends of the competence spectrum, and afflicts the superbly competent and the incompetent alike. Confirmation bias complicates things, because we reinforce what we know with our own observations. The two solutions are, not surprisingly, mindfulness of our own thought process, and empathetic connection with others. And it doesn’t hurt to have someone at the top beaming out these values to their employees.

Further Reading: This piece on Normalizing Deviance, about pilots who don’t follow appropriate pre-flight behavior and checklists, is a great example of how v-Meme Down-compaction, as well as confirmation bias, can create a real hazard, even among expert pilots. Gotta go through that pre-flight checklist, guys, or that plane may crash. It’s as good an example of discarding necessary Legalistic/Absolutistic algorithms in favor of Authoritarian expert judgments as you can get. As we all know, there are often reasons behind those rules.

I have something to think about!

That’s why I’m here, Matt!

The graph at the end reminded me of a similar chart (link below) from positive psychology I came across when reading about “flow”. You have to invert the Dunning-Kruger curve so that a person’s initial high-confidence/low-skill state maps to the “low skill / low difficulty (apathy)” corner in the lower left of the flow chart. Then as a person’s confidence wanes and their ability grows according to the Dunning-Kruger curve, they will progress through states of worry, anxiety, and arousal, and ideally end up somewhere in the control or flow regions.

https://en.wikipedia.org/wiki/Flow_(psychology)#Conditions_for_flow

I’ll check it out, Rian!

OK — read that. Have to think a little more. But one of the interesting aspects was the autotelic personality, which definitely maps more to being performance/goal-based, and therefore, empathetically more data-driven. And therefore, internally defined — and easier to achieve a flow state.

Great post,

As expert metacognitive knowledge becomes autonomic, the knowledge and schemas are stored and recalled, and access to cognitive memory traces is lost (Ericsson & Simon, 1984). The expert is no longer aware of their a priori knowledge. This expert blind spot (EBS), which is domain-specific and based on their advanced content knowledge and experience, is obvious to a novice, as the expert makes hidden assumptions about solutions that turn out to be in conflict with students’ limited experience. This is a common problem for learners and instructors within academia and industry.

This is partially influenced by the organization structure (structure + behavior = culture) or, as you stated, “a direct manifestation of the Authoritarian/Egocentric v-Meme.”

Mike, that’s a great comment. Thanks! The only disagreement I have is with your equation: I’d argue that structure + culture = behavior. I think I need to write a post on why this is the case. Culture is very interesting: an adaptation of many different ingrained v-Meme structures that create persistence in a society considering all the various physical and external influences. As such, it spans the Spiral. It’s the combo of that with structure that creates the way people will act in a given situation.
